Claude Code adoption in Singapore: search interest 2.6x in May 2026, what SG SMEs should actually know

i pulled the singapore google trends curve for "claude" yesterday and the latest weekly value is 71 out of 100 against a twelve-month mean of 27.1, a 2.6x lift in raw search interest. the top rising related queries under that seed are "claude cowork" (197,000% growth, flagged breakout), "what is claude code" (166,050%, breakout), "claude code cli" (131,950%, breakout), "claude code skills" (98,850%) and "claude opus 4.6" (84,200%). "claude code subscription" sits at 43,700%. google trends only assigns growth rates like these when a query goes from near-zero search volume to something people are actually asking about, and this is singapore, where the baseline assumption until recently was that nobody outside the developer bubble knew or cared.
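if you want to reproduce the lift figure from your own trends export, the arithmetic is just the latest weekly value divided by the trailing mean. a minimal sketch, assuming you have exported the weekly series as a plain list of numbers. trends values are indexed 0 to 100 against the peak of whatever window you picked, so the ratio measures relative interest, not search volume. the function names are mine, not part of any google api.

```python
from statistics import mean


def search_lift(latest: float, baseline_mean: float) -> float:
    """How many times higher the latest weekly index is than the baseline."""
    if baseline_mean <= 0:
        raise ValueError("baseline mean must be positive")
    return latest / baseline_mean


def lift_from_series(weekly: list[float]) -> float:
    """Use the last point as 'this week' and the mean of the whole series
    (for example 52 weekly points) as the baseline. Whether the baseline
    should include the latest week is a choice; here it does."""
    return search_lift(weekly[-1], mean(weekly))


# the figures quoted above: latest week 71, twelve-month mean 27.1
print(f"{search_lift(71, 27.1):.1f}x")  # 2.6x
```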

so something shifted in the last four to six weeks in singapore. and the questions people are typing are very specific. price. what is it. how do i use it from a terminal. can it stand in for a coworker. those are not idle "interesting tech news" queries. those are evaluation queries from people considering whether to spend money or change a workflow.

i use claude code daily. it is the primary coding agent on my own machine, has been for about eleven months, and i have used it on every paid client engagement i have run since mid-2025. i am not a neutral observer. but i have also watched enough sg sme founders evaluate it badly to want to write the honest version of what it is, what it actually costs in singapore dollars, and where the case for and against breaks down.

what claude code actually is

claude code is anthropic's official command-line tool that wraps the claude model family in a coding-focused agent loop. you install it via npm, run `claude` from a terminal inside any project directory, and get a chat-shaped interface where the model can read your files, edit them, run shell commands, search the web, and ask you for permission before doing anything that changes state on your machine.

that is the easy explanation. the harder explanation, which matters for anyone evaluating it against the alternatives, is that claude code is three things at once.

one. it is a model wrapper. the underlying engine is whichever claude model you have configured: opus 4.7, sonnet 4.6, or the long-context variants. anthropic ships model updates separately from cli releases, and you mostly do not have to think about that.

two. it is an agent loop. the cli does not just send your prompt and stream a reply. it runs a multi-turn loop where the model decides which tools to call (read this file, run this test, search for that string), waits for the tool to return, reasons over the output, and decides what to do next. you can intervene at any step. for non-trivial tasks the loop runs for thirty seconds to several minutes, doing real work without you typing.

three. it is a skills and memory system. claude code reads a CLAUDE.md file in the project root (and a global one in your home directory) for persistent context, and it supports the model context protocol — the open spec anthropic published for letting any tool plug into any agent. you can give it skills (small markdown files that teach it how to do specific tasks well — think "how we deploy", "how we write postgres migrations", "how this codebase handles auth") and they get loaded automatically when relevant.
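to make the skills idea concrete: anthropic's docs describe a skill as a folder containing a SKILL.md file whose frontmatter carries a name and a description, and the description is what lets the agent decide when the rest of the file is worth loading. the python below is my own simplified model of that idea, not claude code's loader. it only handles flat `key: value` frontmatter, and the `.claude/skills/<name>/SKILL.md` layout mentioned in the comment is an assumption you should check against the current docs.

```python
from pathlib import Path


def read_frontmatter(text: str) -> dict[str, str]:
    """Parse a '---'-delimited header of flat 'key: value' lines.
    Real YAML (lists, quoted or multi-line values) needs a proper parser."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":  # end of frontmatter
            break
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta


def skill_index(skills_dir: Path) -> dict[str, str]:
    """Map skill name -> description for every <skill>/SKILL.md under skills_dir.
    Only this short index needs to be in context up front; a skill's body
    would be read later, when a task matches its description."""
    index: dict[str, str] = {}
    for skill_file in sorted(skills_dir.glob("*/SKILL.md")):
        meta = read_frontmatter(skill_file.read_text(encoding="utf-8"))
        if "name" in meta:
            index[meta["name"]] = meta.get("description", "")
    return index


example = """---
name: postgres-migrations
description: How this codebase writes, names and reviews postgres migrations
---
Always ship a matching down migration.
"""
print(read_frontmatter(example)["name"])  # postgres-migrations

# on a real repo: skill_index(Path(".claude/skills"))  (path is an assumption)
```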

the difference between claude code and a chat-style assistant like chatgpt or claude.ai is that claude code lives inside your terminal, has read-write access to your repo, can run commands, and is opinionated about engineering work specifically. it is not trying to be a friendly general-purpose assistant. it is trying to be a contractor.
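the agent loop from point two is easier to see in code. below is a toy version in python, not claude code's actual implementation: the model is a scripted stand-in, the tool name is made up, and `approve` plays the role of the permission prompt. what it shows is the control flow: call the model, run whatever tool it asks for if you allow it, feed the result back, and repeat until it answers without asking for a tool.

```python
from dataclasses import dataclass
from typing import Callable, Iterator, Optional


@dataclass
class ToolCall:
    name: str
    arg: str


@dataclass
class Reply:
    text: str
    tool_call: Optional[ToolCall] = None  # None means the model is finished


Model = Callable[[list[str]], Reply]


def run_agent(goal: str, model: Model, tools: dict[str, Callable[[str], str]],
              approve: Callable[[ToolCall], bool], max_turns: int = 20) -> str:
    transcript = [f"user: {goal}"]
    for _ in range(max_turns):
        reply = model(transcript)            # model sees the full history each turn
        transcript.append(f"assistant: {reply.text}")
        if reply.tool_call is None:          # no tool requested: final answer
            return reply.text
        call = reply.tool_call
        if not approve(call):                # human said no: tell the model, keep going
            transcript.append(f"tool {call.name}: denied by user")
            continue
        result = tools[call.name](call.arg)  # run the tool and feed its output back
        transcript.append(f"tool {call.name}: {result}")
    return "stopped: turn limit reached"


def scripted_model(replies: list[Reply]) -> Model:
    """Stand-in for a real model: returns canned replies in order."""
    it: Iterator[Reply] = iter(replies)
    return lambda transcript: next(it)


script = [
    Reply("running the test suite first", ToolCall("shell", "pytest -q")),
    Reply("all tests pass, nothing to fix"),
]
tools = {"shell": lambda cmd: "3 passed"}  # fake tool output
print(run_agent("fix the failing test", scripted_model(script), tools,
                approve=lambda call: True))
# all tests pass, nothing to fix
```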

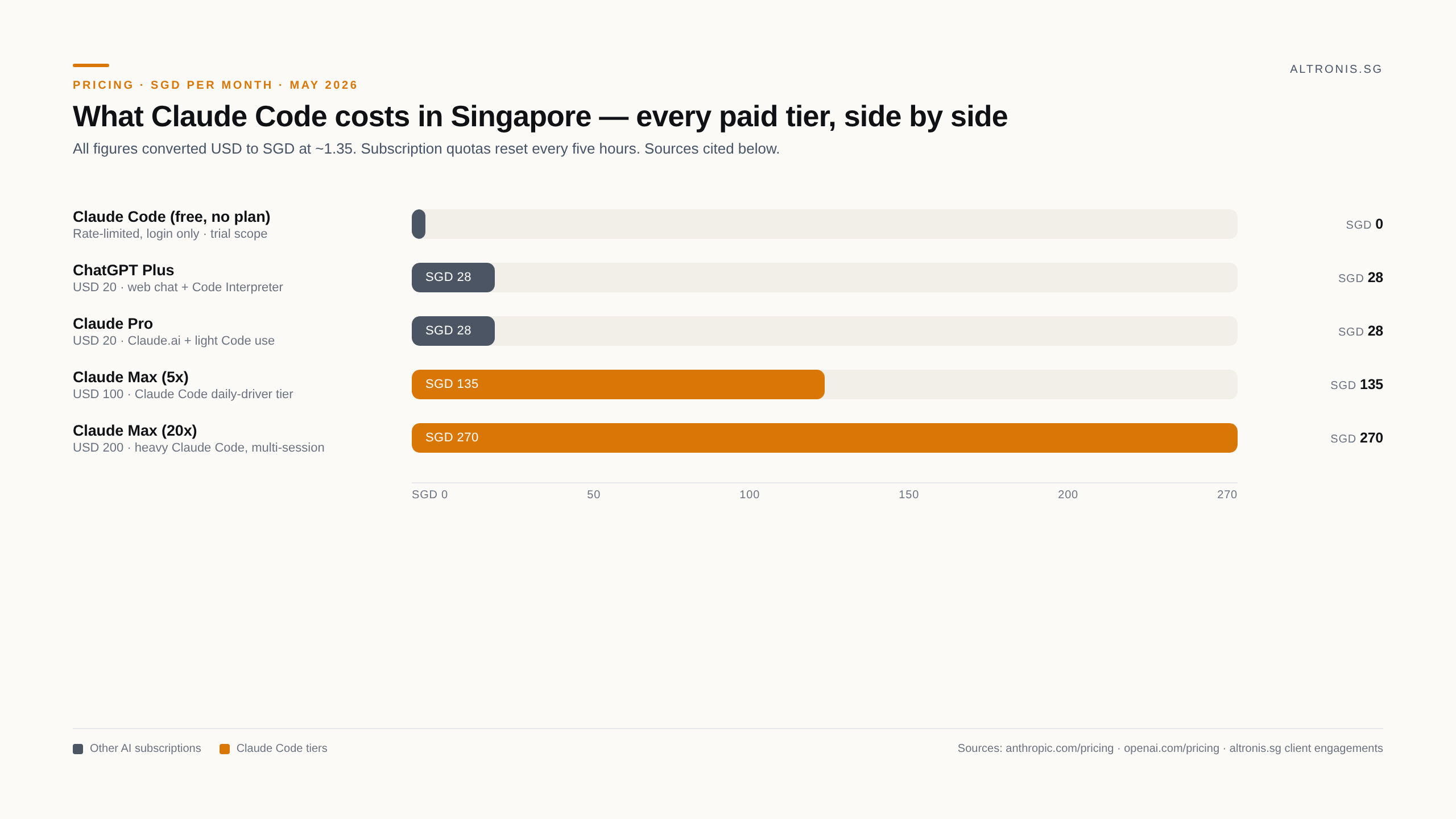

what it actually costs in singapore dollars

the pricing query ("claude code subscription" in the rising list) is doing a lot of work in the trend data, because the answer has shifted twice in the last six months, and i think most people who type it are getting outdated answers from older blog posts.

as of may 2026 there are three ways to pay for claude code from singapore.

api pay-as-you-go. you bring an anthropic api key, claude code uses it directly, and you pay per million input/output tokens metered to that key. for opus 4.7 (the current top model) the rate is usd 5 per million input and usd 25 per million output tokens, roughly sgd 7 / sgd 34. for sonnet 4.6 it is usd 3 / usd 15 per million tokens. a serious coding session against a real repo runs anywhere from two to ten million tokens depending on how much context the model loads. so a single deep opus session costs anywhere from sgd 10 to sgd 70 in raw api spend, which makes the monthly bill hard for a budget owner to predict. (anthropic cut opus pricing 3x in late 2025; older blog posts still quoting usd 15 / usd 75 per million are working off the opus 4.1 numbers.)

claude pro / max subscription. claude pro is usd 20/month and max starts at usd 100/month. both include claude code usage with a quota that resets every five hours. for solo developers and small teams this is the rational choice. usd 100 a month works out to roughly sgd 135 including card fx markup, which is what i pay personally. for the volume of work i do on it daily, that is well below what the same usage would cost through the api.

team / enterprise plans. seat-based pricing for teams that want sso, audit logs, central billing, and shared usage pools. team standard runs usd 25 / seat / month (or usd 20 / seat if billed annually), and team premium runs usd 125 / seat / month (usd 100 / seat annually). enterprise starts at usd 20 / seat with usage scaling on top. for a five-or-six person sg ops team that wants licensed access without each member juggling a personal subscription, team standard is the rational entry point.
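the arithmetic behind these numbers is simple enough to keep in a script and rerun when prices or the exchange rate move. a minimal sketch using the list prices quoted above and an assumed fx rate of 1.35 sgd per usd (roughly what the conversions in this post imply; swap in the current rate). the model and tier keys are my own labels, not api identifiers. the api function ignores prompt caching, where cached input is billed at a tenth of the base input rate, so real claude code sessions can come in well under its figure; the seat function leaves out tax, fx markup and any usage billed on top of seats.

```python
USD_TO_SGD = 1.35  # assumption: roughly the rate implied by the conversions above

# api list prices, usd per million tokens: (input, output)
API_USD_PER_MTOK = {
    "opus-4.7": (5.0, 25.0),
    "sonnet-4.6": (3.0, 15.0),
}

# team plans, usd per seat per month: (billed monthly, billed annually)
TEAM_SEAT_USD = {
    "standard": (25.0, 20.0),
    "premium": (125.0, 100.0),
}


def session_cost_sgd(model: str, input_tokens: int, output_tokens: int,
                     fx: float = USD_TO_SGD) -> float:
    """Raw metered api cost of one session in sgd, with no caching discount."""
    in_rate, out_rate = API_USD_PER_MTOK[model]
    usd = (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000
    return usd * fx


def team_cost_sgd(tier: str, seats: int, annual: bool = False,
                  fx: float = USD_TO_SGD) -> float:
    """Monthly seat cost in sgd for a team plan."""
    monthly_rate, annual_rate = TEAM_SEAT_USD[tier]
    return seats * (annual_rate if annual else monthly_rate) * fx


# a lighter opus session: 2m input tokens, 100k output tokens
print(f"one opus session: sgd {session_cost_sgd('opus-4.7', 2_000_000, 100_000):.1f}")
# prints: one opus session: sgd 16.9

# six team-standard seats, billed monthly vs annually
print(f"6 seats, monthly billing: sgd {team_cost_sgd('standard', 6):.2f}/month")
print(f"6 seats, annual billing:  sgd {team_cost_sgd('standard', 6, annual=True):.2f}/month")
```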

the right way to read these numbers, especially on the max tier, is "what does it cost to give a senior engineer an extra pair of hands for one project". sgd 135 a month is a rounding error against any sg sme's other engineering tooling spend. it does not buy you an engineer; it buys an existing engineer the ability to ship more of the work they are already doing.

"what is claude code" — the question behind the question

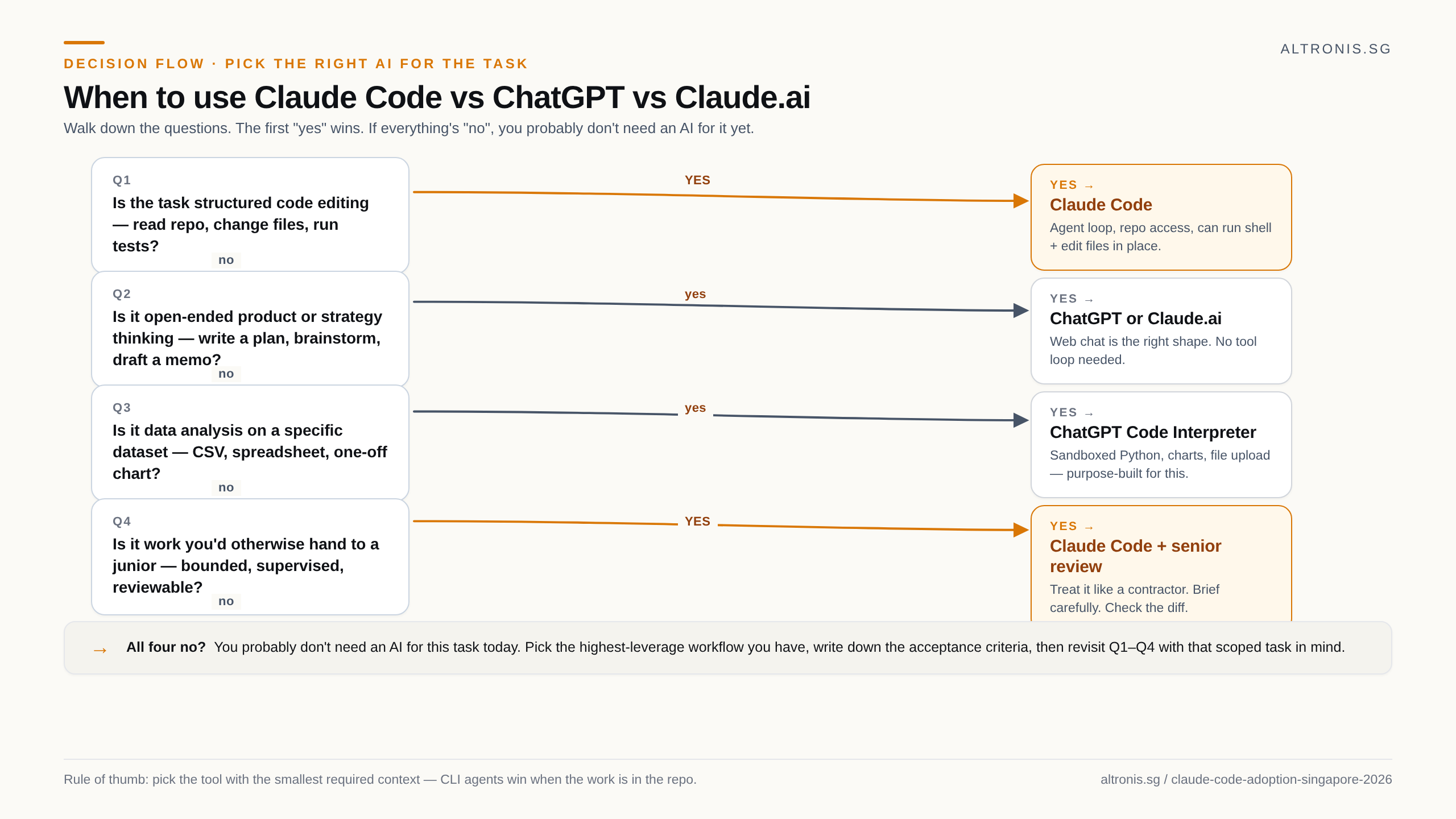

"what is claude code" is the second-largest rising query, and it is the one i think is most worth taking seriously. when somebody types it, they almost always already have one of two implicit comparisons in mind.

the first comparison is to chatgpt. they have heard claude code does coding well, they currently use chatgpt for code questions, and they want to know whether to switch. the honest answer for that audience is that the cli + agent-loop shape changes the value enough that switching is worth it for any task that touches more than one file or runs longer than one prompt-response. for a one-shot "here is my code, what is wrong" question, both are roughly equivalent and chatgpt's web ui is slightly nicer. for "go through this codebase, find all the places that read from the old api, migrate them to the new one, run the tests, fix anything broken", claude code does that in one session; chatgpt's chat window cannot, because it has no access to your repo or your test runner.

the second comparison is to github copilot. copilot is best known as a code-completion tool that lives inside your editor and proposes the next few lines as you type. claude code is an agent that takes a goal and executes it. they do different things, and people who know one well frequently struggle to evaluate the other. if your work is mostly typing new code and you want speed-of-typing improvements, copilot's completions are the answer. if your work involves running existing code, debugging, refactoring, integration, deployment scripts, or any task with multiple steps, claude code is the answer. most real engineering work is the second shape, not the first.

the "claude code skills" and "claude code cli" rising queries

those two are the giveaway that the people searching are not just curious; they are doing evaluation work. nobody types "claude code cli" as a casual query. you type that when you have decided to install it and you want the install command. nobody types "claude code skills" without already knowing that claude code has a skills system; that is the query somebody makes after hearing about the agent skills feature anthropic shipped in late 2025, when they are figuring out whether to write their own.

this matters because it tells you the trend is not curiosity-shaped, it is implementation-shaped. people are committing to use the tool and they are looking up specific things mid-install. that pattern in google trends usually precedes a noticeable jump in actual production usage by about eight to twelve weeks. if the curve holds, claude code is going from "engineer hobby tool" to "team tool that procurement asks about" in singapore some time in q3 2026.
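the q3 estimate is plain date arithmetic on that eight-to-twelve-week lag. a sketch, assuming a trends reading taken in mid-may 2026 (the exact day is my assumption); the lag itself is the rule of thumb stated above, not something google trends guarantees.

```python
from datetime import date, timedelta


def quarter(d: date) -> str:
    """Calendar quarter label, e.g. 'Q3 2026'."""
    return f"Q{(d.month - 1) // 3 + 1} {d.year}"


reading = date(2026, 5, 18)  # assumption: a mid-may trends reading
for lag_weeks in (8, 12):
    landing = reading + timedelta(weeks=lag_weeks)
    print(lag_weeks, landing.isoformat(), quarter(landing))
# 8 2026-07-13 Q3 2026
# 12 2026-08-10 Q3 2026
```

any reading from about may 6 onward keeps even the eight-week end of the range inside q3; an early-may reading would put it at the end of june.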

what kind of work claude code is actually good at

the easy demos make it look like a one-person product team. the reality, from eleven months of daily use on real client work, is narrower and more useful than that.

it is excellent at: bounded code edits with clear acceptance criteria. refactors against an existing codebase. writing and running tests against a known shape. translating shell-script-shaped problems into proper code. one-off automations that touch your repo. exploratory data analysis when your data lives in files. anything where the output is text or code and a human can review the diff before it ships.

it is not great at: open-ended product or strategy thinking, where you do not yet know what you want. work that needs deep customer or business context the model does not have. tasks where the cost of a wrong answer is high and you cannot easily reverse it. anything where the value is in the conversation rather than the artifact.

the realistic framing for a sg sme considering claude code in mid-2026, in my view:

- if you already have one or two engineers, get each of them a claude max subscription. the productivity lift is real and the monthly cost is small enough not to matter. ship the internal tools that have been on the backlog, automate ops scripts, refactor the code you have always wanted to refactor.

- if you have a small ops or analyst team without engineers, claude code is genuinely useful for data exploration, one-off scripts, internal automations, and document-processing pipelines. it does not replace engineering judgement on customer-facing code, but it removes a lot of the friction on internal tooling work.

- if you are evaluating it for a regulated or high-stakes domain, treat it the way you would treat any new contractor — start with read-only or low-blast-radius work, build trust, and gate the high-stakes work behind explicit human review until you have your own data on how it performs against your codebase.
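claude code has its own permission settings for allowing or denying specific tools and commands, and in practice that is where this kind of policy would live. the python below is not that mechanism; it is a hypothetical sketch of the policy itself: sort each proposed shell command by blast radius and only auto-allow what sits at or below the trust level the agent has earned so far. the command lists are illustrative, and substring matching like this is far too crude for real enforcement.

```python
from enum import IntEnum


class Risk(IntEnum):
    READ_ONLY = 1  # looks, changes nothing
    LOW = 2        # changes local state you can throw away
    HIGH = 3       # touches shared, customer-facing or hard-to-reverse state


# hypothetical, deliberately short lists for illustration
READ_ONLY_PREFIXES = ("cat ", "ls ", "grep ", "git diff", "git log", "git status")
HIGH_RISK_MARKERS = ("git push", "rm -rf", "deploy", "migrate", "drop table", "kubectl")


def classify(command: str) -> Risk:
    lowered = command.lower()
    if any(marker in lowered for marker in HIGH_RISK_MARKERS):
        return Risk.HIGH
    if lowered == "ls" or lowered.startswith(READ_ONLY_PREFIXES):
        return Risk.READ_ONLY
    return Risk.LOW  # anything unrecognised is treated as a local change


def needs_human_review(command: str, trusted_up_to: Risk) -> bool:
    """True if the command is riskier than the level you currently auto-allow."""
    return classify(command) > trusted_up_to


# week one: only read-only work runs unattended
print(needs_human_review("git diff HEAD~1", Risk.READ_ONLY))  # False
print(needs_human_review("pytest -q", Risk.READ_ONLY))        # True
```

raising `trusted_up_to` from `READ_ONLY` to `LOW` once you have your own data is the "build trust" step; nothing in the sketch ever auto-allows `HIGH`.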

what to actually do this week if you are a sg sme owner reading the trend curve

three small steps that cost almost nothing.

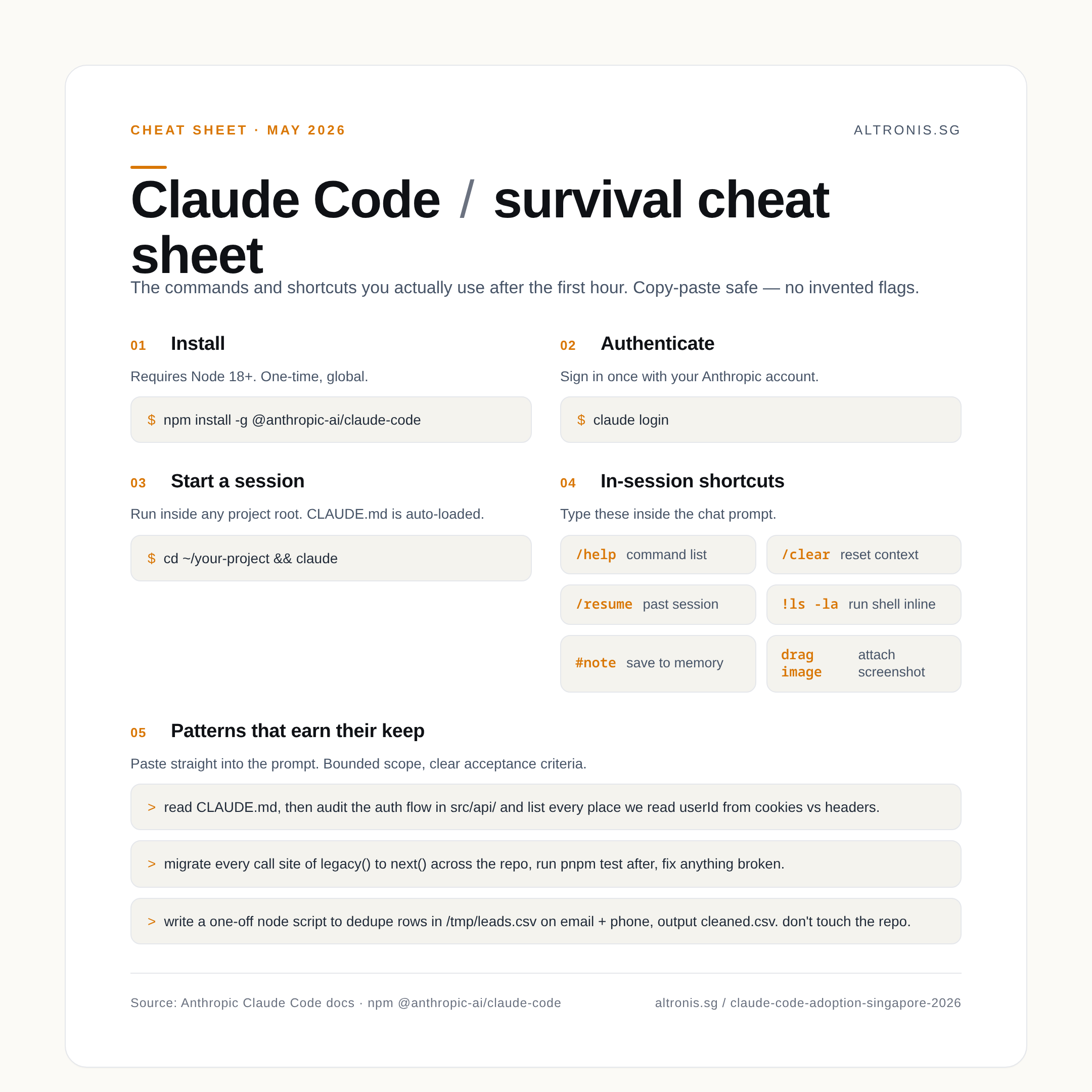

one. install it on your own machine. the cli is one command, `npm install -g @anthropic-ai/claude-code` (it needs node.js 18 or newer), after which you sign in with your anthropic account. spend an afternoon pointing it at a real internal tool you have always wanted to fix. you will learn more about whether it fits your team in two hours of hands-on use than in a month of reading about it.

two. set up a CLAUDE.md file at the root of your repo that describes the project, the conventions, the things that have bitten previous engineers. that one file is the single biggest quality lever for claude code's output and most people skip it because it sounds obvious.

three. write down the three or four tasks in your business that look like the right shape for an agent: bounded scope, clear acceptance criteria, fail-safe (you can throw away the work if the output is bad), no customer impact during the run. those are your candidate workflows. test on those first; do not throw the agent at your auth code on day one.
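the four-part test in step three is mechanical enough to write down as a checklist. a minimal sketch; the task names are hypothetical examples, and the true/false values stand in for the judgement calls you would make about your own backlog.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    bounded_scope: bool
    clear_acceptance: bool    # you can say up front what "done" looks like
    throwaway_safe: bool      # you can discard the output if it is bad
    no_customer_impact: bool  # nothing customer-facing changes during the run

    def agent_ready(self) -> bool:
        """A candidate only if every criterion holds."""
        return all((self.bounded_scope, self.clear_acceptance,
                    self.throwaway_safe, self.no_customer_impact))


backlog = [
    Task("clean up the invoice-export script", True, True, True, True),
    Task("rewrite the login / auth flow", True, True, False, False),
    Task("decide next quarter's pricing", False, False, True, True),
]
candidates = [task.name for task in backlog if task.agent_ready()]
print(candidates)  # ['clean up the invoice-export script']
```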

the search-trend curve is doing what every tools-adoption curve does. there is a cohort six to twelve months ahead of the public-awareness curve, then a cohort that catches up, then a long tail. if you are in singapore and reading this in may 2026, you are in the middle cohort. that is a good place to be — late enough that the rough edges are sanded, early enough that there is real lift left.

i will write a follow-up in three to four months on what changed. by then there will be specific numbers on cost-per-task in production sg sme settings, and the team-tier pricing comparison against having an outsourced offshore developer (which is what most sg smes are actually deciding between today). until then — install it, use it on something small, decide for yourself.